Michael Bennie (second from left) next to Lina Reiss, Ph.D., and the summer lab team at Oregon Hearing & Science University.

By Michael Bennie

Before the summer of 2025, I was mostly focused on using computational methods for analyzing human written text, but I hadn’t yet experienced what it feels like to design and run a human subjects study in hearing science.

That changed while working in Dr. Lina Reiss’s lab as part of SURIEA (the Summer Undergraduate Research or Internship Experience in Acoustics), a program organized by the Acoustical Society of America and my experience was sponsored by Hearing Health Foundation. It provided my first real chance to step into hearing science and learn the experimental side of speech perception under the tutelage of a senior researcher.

Joining Dr. Reiss’s Cochlear Implant and Hearing Aid research lab meant learning practical audiology skills (like conducting audiograms, using tympanometry to check middle-ear status, and running sound balancing tests) as well as soft skills (like email formatting, participant recruitment, and lab etiquette).

The lab went on a hiking trip in Oregon’s mountains.

Despite the imposing manner and high expectations that most senior scientists naturally emanate, the inclusive and supportive environment found while working with Drs. Reiss and Michelle Molis slowly made me more confident to make mistakes and learn more as a researcher, and, more importantly, allowed me to play around with audio stimuli and see how lab members reacted in real time. That shift from thinking about spoken language as abstract data to seeing it as perception unfolding in real time was a fundamental reason this program mattered to me.

The project I worked on focused on dichotic vowel fusion, a phenomenon where two different vowels played to opposite ears can be heard as one fused vowel. For some people, especially those with hearing loss, when they hear close sounding words in both ears (like “big” and “bag”), they may actually process it as one single, fused average (like “beg”). It may seem like a niche auditory trick, but it actually serves as a direct window into how vowels, consonants, and other lower level sound cues interact with higher level lexical knowledge.

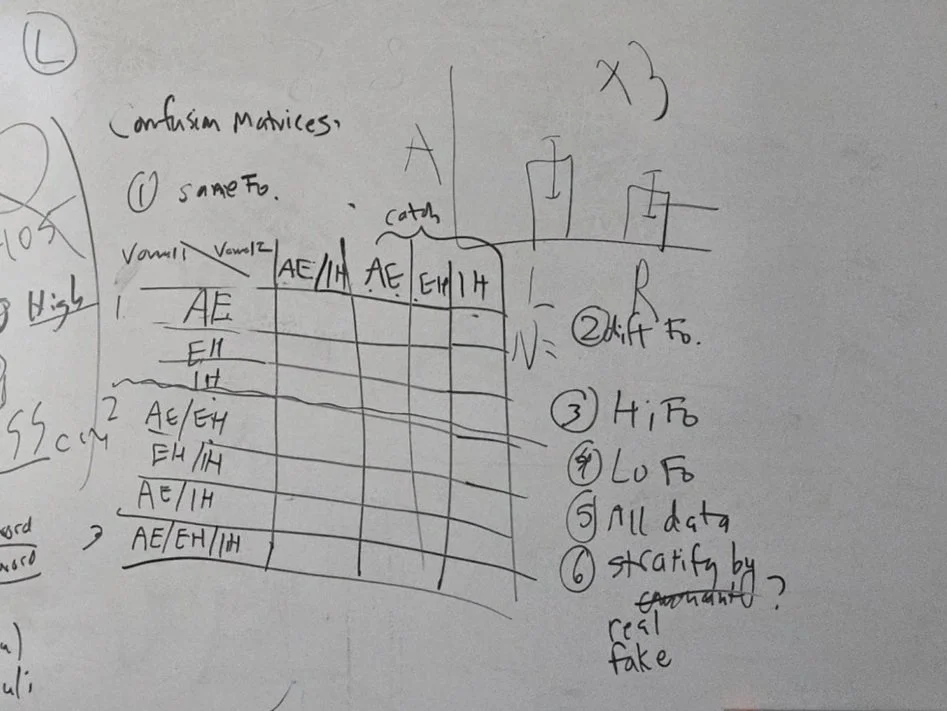

For this study, we used pairs of words with two consonants and a vowel in the middle where the consonants matched but the vowels differed (e.g., /IH/ versus /AE/) and were split across ears, creating situations where the listener might report a third percept (often /EH/).

My role spanned the full pipeline: generating and validating the stimuli, testing participants, and performing data analysis. I built a workflow for formant-controlled stimulus generation, making sure vowel duration, intensity, and pitch characteristics were tightly controlled so we could interpret perceptual changes as meaningful rather than accidental artifacts.

Michael took this photo of the whiteboard after brainstorming with Drs. Molis and Reiss on how to display the experiment results.

It was very satisfying when both typical hearing lab members and those with hearing loss were able to tell apart the words when they only used one side of the headphone, but merged the sounds together when hearing both at the same time.

On the human side, experienced lab members (like Holden and Nick) taught me to administer basic hearing tests and recruit participants. Then I ran the listening sessions themselves, troubleshooting everything from headphone cables coming loose to response confusion. Seeing the phenomenon appear in real participants unfamiliar with this phenomenon made the weeks of sitting on the other side of the sound booth silently watching their responses and checking to see if any issues arrived feel consequential and rewarding.

This was all to test a question that, surprisingly, hadn’t been explored much in this area: Does lexical frequency bias what people hear when the acoustics are ambiguous? We manipulated lexical frequency by choosing consonant contexts where the heard words (with /IH/ and /AE/) and the possible fused candidate (with /EH/) varied from common to rare in spoken English.

Participants heard the dichotic word pair and responded using a forced-choice interface that included three word options and three vowel-only options, which let us capture both complete fusion and partial fusion patterns.

The results supported the idea that fusion isn’t just passive “spectrum averaging.” Lexical condition significantly influenced the rate of hearing an averaged sound. Moreover, in some trials listeners selected multiple responses which suggests active perceptual reconstruction beyond a simple acoustic midpoint.

The Multnomah County Library’s foreign language section, where Michael spent several days a week reading comic books in Mandarin.

For me, that was the most exciting part: You could see lexical knowledge shaping the percept, not as a vague post-hoc explanation, but as a measurable bias in what listeners reported hearing.

Personally, SURIEA is the experience that turned my curiosity about hearing and word recognition into a concrete Ph.D. direction. I went in with the previous research experience of being “the coding person,” and I came out wanting to build research programs that combine experimental psycholinguistics, hearing science, and computational modeling for questions about how listeners recognize words under difficult listening conditions. I want to keep asking how top-down lexical structure biases percepts, how hearing conditions reshape those biases, and how computational models can capture the dynamics of speech recognition as it actually happens for diverse listeners.

Michael Bennie will graduate from the University of Florida this spring with a bachelor of science in computer science. He has also been studying Mandarin Chinese for seven years. Lina Reiss, Ph.D., is a 2012–2013 Emerging Research Grants scientist and a professor of otolaryngology–head and neck surgery at the Oregon Health & Science University School of Medicine. The team submitted an abstract based on the SURIEA project results to the 2026 Acoustical Society of America conference, to be held in Philadelphia in May 2026.

My advice for anyone starting this journey is to make sure that you know your limits so you don’t get into a situation that you can’t handle. Plus, make sure the people you’re interacting with know what you’re going through so they can help.